Hi,

In this post I'll compare two join components, the Merge Join and the Lookup, with the relational join, in order to determine which solution is the best way to solve your problem when joining data from different data sources. This post is based on SQL Server 2008 R2.

Merge Join

The Merge Join is used for joining data from two data sources at a time. Common ETL scenarios require you to access two or more disparate data sources simultaneously and merge their results into a single data set. For example, you might have a normalized source system and want to merge its normalized tables into one denormalized table.

The Merge Join in SSIS allows you to perform an inner or outer join in a streaming fashion. The component is asynchronous and partially blocking: it accepts two sorted input streams and outputs a single stream that combines the chosen columns into a single structure. It's not possible to configure a separate non-matched output.
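To make the "two sorted input streams" requirement concrete, here is a minimal sketch of the general merge join technique in Python. It is not the SSIS implementation; the function name `merge_join_inner` and the `(key, value)` row layout are assumptions made up for illustration.

```python
def merge_join_inner(left, right):
    """Inner-join two iterables of (key, value) rows that are ALREADY sorted by key.

    Hypothetical helper for illustration only, not an SSIS API.
    """
    left_iter, right_iter = iter(left), iter(right)
    l = next(left_iter, None)
    r = next(right_iter, None)
    while l is not None and r is not None:
        if l[0] < r[0]:
            # Left key is behind: advance the left stream.
            l = next(left_iter, None)
        elif l[0] > r[0]:
            # Right key is behind: advance the right stream.
            r = next(right_iter, None)
        else:
            key = l[0]
            # Buffer every right row that shares this key ...
            group = []
            while r is not None and r[0] == key:
                group.append(r)
                r = next(right_iter, None)
            # ... and pair it with every left row that shares the key.
            while l is not None and l[0] == key:
                for g in group:
                    yield (key, l[1], g[1])
                l = next(left_iter, None)


prices = [("A", 10), ("B", 20), ("D", 40)]
discounts = [("A", 1), ("C", 3), ("D", 4), ("D", 5)]
joined = list(merge_join_inner(prices, discounts))
```

Only the rows for the current key are held in memory at any moment, which is why this kind of join stays cheap on memory as long as both inputs arrive sorted.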

Lookup

The Lookup component in SQL Server Integration Services allows you to perform the equivalent of relational inner and outer hash joins. It does not use the join algorithms built into the database engine. You would use this component within the context of an integration process, such as an ETL layer that populates a data warehouse from multiple heterogeneous source systems.

The transform is written to behave synchronously: for the most part, it does not block the pipeline while it does its work. In certain cache modes, however, the component initially blocks the package's execution for a period of time.
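The behaviour described above can be sketched as a hash lookup with a pre-loaded cache. This is only an illustration of the technique, assuming a full-cache style mode; `build_cache` and `lookup_rows` are hypothetical names, not SSIS APIs.

```python
def build_cache(reference_rows):
    """Load the whole reference set into a hash table before any input row is processed.

    This up-front load is the step that can hold up execution for a while.
    """
    cache = {}
    for key, value in reference_rows:
        # Keep the first row seen for a key (assumption for this sketch).
        cache.setdefault(key, value)
    return cache


def lookup_rows(input_rows, cache):
    """Probe the cache once per input row and split rows into match / no-match lists."""
    matched, unmatched = [], []
    for key, value in input_rows:
        if key in cache:
            matched.append((key, value, cache[key]))
        else:
            unmatched.append((key, value))
    return matched, unmatched


discount_cache = build_cache([("A", 1), ("D", 4)])
matched, unmatched = lookup_rows([("A", 10), ("B", 20), ("D", 40)], discount_cache)
```

Once the cache is built, each input row needs only one hash probe and the input does not have to be sorted. The price is that the whole reference set has to fit in memory.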

Conclusion: There is only one reason to use the Merge Join, and that is a lack of memory; otherwise, always use the Lookup transform. The Lookup transform was improved considerably in SQL Server 2008.

Case Study on Merge Join Vs Lookup Performance - I:

TheViewMaster wrote:

Case Study on Merge Join Vs Lookup Performance - II:

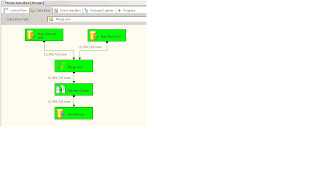

Screenshot #1 shows a few points that distinguish the Merge Join transformation from the Lookup transformation.

Regarding Lookup:

If you want to find the rows in source 2 that match each input row from source 1, and you know there will be only one match for every input row, then I would suggest using the Lookup operation. For example, if you have an OrderDetails table and want to find the matching Order Id and Customer Number, then Lookup is the better option.

Regarding Merge Join:

If you want to perform joins such as fetching all addresses (Home, Work, Other) from the Address table for a given customer in the Customer table, then you have to go with Merge Join, because a customer can have one or more addresses associated with them.
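The difference between the two cases can be sketched with a small Python example. The customer and address rows are made-up sample data, and both functions are hypothetical helpers that only show the shape of the result, not how SSIS produces it. The sketch assumes a lookup that returns the first match only.

```python
from collections import defaultdict

# Made-up sample data: (customer_id, name) and (customer_id, address_type).
customers = [(1, "Ann"), (2, "Bob")]
addresses = [(1, "Home"), (1, "Work"), (1, "Other"), (2, "Work")]


def first_match_lookup(customers, addresses):
    """One output row per customer: only the first matching address is kept."""
    cache = {}
    for customer_id, address_type in addresses:
        cache.setdefault(customer_id, address_type)
    return [(cid, name, cache[cid]) for cid, name in customers if cid in cache]


def one_to_many_join(customers, addresses):
    """One output row per (customer, address) pair, as a join would return."""
    by_customer = defaultdict(list)
    for customer_id, address_type in addresses:
        by_customer[customer_id].append(address_type)
    return [
        (cid, name, address_type)
        for cid, name in customers
        for address_type in by_customer.get(cid, [])
    ]


lookup_result = first_match_lookup(customers, addresses)
join_result = one_to_many_join(customers, addresses)
```

With this data the lookup-style function yields two rows (one per customer), while the join-style function yields four (one per address), which is the behaviour you need for the Customer and Address case.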

An example to compare:

Here is a scenario to demonstrate the performance differences between Merge Join and Lookup. The data used here is a one-to-one join, which is the only scenario the two have in common for comparison.

1.

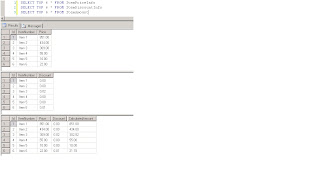

I have

three tables named dbo.ItemPriceInfo, dbo.ItemDiscountInfo and dbo.ItemAmount. Create scripts for these tables are provided under SQL scripts

section.

2. The tables dbo.ItemPriceInfo and dbo.ItemDiscountInfo both have 13,349,729 rows. Both tables have ItemNumber as the common column. ItemPriceInfo has price information and ItemDiscountInfo has discount information. Screenshot #2 shows the row count in each of these tables. Screenshot #3 shows the top 6 rows to give an idea of the data present in the tables.

3. I created two SSIS packages to compare the performance of the Merge Join and Lookup transformations. Both packages have to take the information from the tables dbo.ItemPriceInfo and dbo.ItemDiscountInfo, calculate the total amount, and save it to the table dbo.ItemAmount.

4. The first package used the Merge Join transformation, configured as an INNER JOIN, to combine the data. Screenshots #4 and #5 show the sample package execution and the execution duration. It took 05 minutes 14 seconds 719 milliseconds to execute the Merge Join transformation based package.

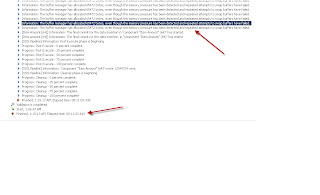

5. The second package used the Lookup transformation with Full cache (which is the default setting). Screenshots #6 and #7 show the sample package execution and the execution duration. It took 11 minutes 03 seconds 610 milliseconds to execute the Lookup transformation based package. You might encounter the warning message "Information: The buffer manager has allocated nnnnn bytes, even though the memory pressure has been detected and repeated attempts to swap buffers have failed." Here is a link that talks about how to calculate lookup cache size. During this package execution, even though the Data Flow task completed faster, the pipeline cleanup took a lot of time.

6. This doesn't mean the Lookup transformation is bad. It just has to be used wisely. I use it quite often in my projects, but then again I don't deal with 10+ million rows for lookup every day. Usually, my jobs handle between 2 and 3 million rows, and for that the performance is really good. Up to 10 million rows, both performed equally well. Most of the time, what I have noticed is that the bottleneck turns out to be the destination component rather than the transformations. You can overcome that by having multiple destinations. Here is an example that shows the implementation of multiple destinations.

7. Screenshot #8 shows the record count in all three tables. Screenshot #9 shows the top 6 records in each of the tables.
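To get a feel for why a full-cache lookup over 13,349,729 rows can run into the memory-pressure warning from step 5, here is a rough back-of-the-envelope estimate in Python. It rests on assumptions: the lookup is taken to cache only ItemNumber plus one numeric(18, 2) column, and the byte widths are SQL Server storage sizes rather than SSIS buffer sizes. Per-row overhead inside the cache is not modelled, so treat the result as a lower bound, not as the official way to size a lookup cache.

```python
ROWS = 13_349_729           # row count reported in step 2
ITEM_NUMBER_BYTES = 30 * 2  # nvarchar(30): up to 30 characters at 2 bytes each
NUMERIC_18_2_BYTES = 9      # numeric(18, 2) storage size in SQL Server


def estimate_cache_bytes(rows, bytes_per_row):
    """Raw data volume only; hash-table and buffer overhead are ignored (assumption)."""
    return rows * bytes_per_row


total_bytes = estimate_cache_bytes(ROWS, ITEM_NUMBER_BYTES + NUMERIC_18_2_BYTES)
total_mib = total_bytes / 1024 ** 2
print(f"At least {total_mib:.0f} MiB of raw reference data")
```

Even before any overhead, the raw reference data alone is several hundred MiB, so the available memory on the machine matters a lot for a lookup of this size.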

Hope that helps.

SQL Scripts:

CREATE TABLE [dbo].[ItemAmount](
    [Id] [int] IDENTITY(1,1) NOT NULL,
    [ItemNumber] [nvarchar](30) NOT NULL,
    [Price] [numeric](18, 2) NOT NULL,
    [Discount] [numeric](18, 2) NOT NULL,
    [CalculatedAmount] [numeric](18, 2) NOT NULL,
    CONSTRAINT [PK_ItemAmount] PRIMARY KEY CLUSTERED ([Id] ASC)) ON [PRIMARY]
GO

CREATE TABLE [dbo].[ItemDiscountInfo](
    [Id] [int] IDENTITY(1,1) NOT NULL,
    [ItemNumber] [nvarchar](30) NOT NULL,
    [Discount] [numeric](18, 2) NOT NULL,
    CONSTRAINT [PK_ItemDiscountInfo] PRIMARY KEY CLUSTERED ([Id] ASC)) ON [PRIMARY]
GO

CREATE TABLE [dbo].[ItemPriceInfo](
    [Id] [int] IDENTITY(1,1) NOT NULL,
    [ItemNumber] [nvarchar](30) NOT NULL,
    [Price] [numeric](18, 2) NOT NULL,
    CONSTRAINT [PK_ItemPriceInfo] PRIMARY KEY CLUSTERED ([Id] ASC)) ON [PRIMARY]
GO
